The Missing Primitives for Trustworthy AI Agents

This is the final major installment in the Trustworthy AI series.

The earlier parts established the mechanical and architectural safety foundations. The full series outline:

- Part 0 - Introduction

- Part 1 - End-to-End Encryption

- Part 2 - Prompt Injection Protection

- Part 3 - Agent Identity and Attestation

- Part 4 - Policy-as-Code Enforcement

- Part 5 - Verifiable Audit Logs

- Part 6 - Kill Switches and Circuit Breakers

- Part 7 - Adversarial Robustness

- Part 8 - Deterministic Replay

- Part 9 - Formal Verification of Constraints

- Part 10 - Secure Multi-Agent Protocols

- Part 11 - Agent Lifecycle Management

- Part 12 - Resource Governance

- Part 13 - Distributed Agent Orchestration

- Part 14 - Secure Memory Governance

- Part 15 - Agent-Native Observability

- Part 16 - Human-in-the-Loop Governance

- Part 17 - Conclusion (Operational Risk Modeling)

With all technical controls in place, one primitive remains, and it cannot be automated by design: human-in-the-loop (HITL) governance, the explicit insertion of humans into autonomous decision cycles.

It is arguably the ultimate safety primitive: the mechanism through which organizations exert judgment, enforce accountability, control risk, and maintain operational authority over agent systems.

Humans as the Final Safety Boundary (Part 16)

Autonomous agents will eventually encounter situations where:

- rules conflict,

- the model expresses uncertainty,

- retrieved memories are ambiguous,

- agent versions diverge,

- latent bias influences decisions,

- or the consequences exceed an acceptable risk threshold.

No matter how strong the surrounding technical safety architecture is, humans remain the only source of contextual understanding, ethical reasoning, and institutional accountability.

Why We Need HITL

Human involvement is essential for two fundamental reasons.

Judgment and Ethics

Agents excel at pattern completion, prediction, and action sequencing.

But they have no grounding in human values, organizational duty, or ethical nuance. They cannot evaluate whether an action is socially appropriate, reputationally harmful, or morally unacceptable. They cannot adjudicate fairness or weigh trade-offs that require interpretation of law, policy, culture, or context. Human oversight introduces intentionality - the uniquely human ability to understand when rules should be applied strictly, flexibly, or not at all.

Accountability and Operational Control

Organizations must be able to trace responsibility for decisions.

Humans remain accountable for risk acceptance, policy exceptions, and the consequences of system actions. When agents malfunction, humans must override, intervene, or suspend them. HITL provides structured escalation paths, reviewer authority, and well-defined handoffs between automated autonomy and human control. It transforms agent systems from closed, self-determining loops into governable systems aligned with organizational responsibility.

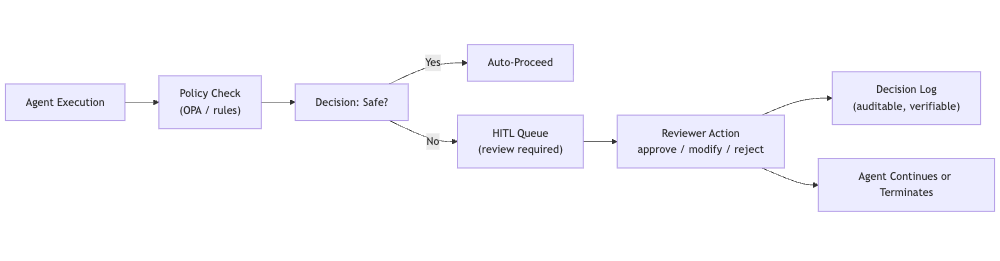

Diagram: HITL Oversight Architecture

Before diving into the primitives, it is helpful to visualize the control bottleneck that governs all high-risk agent actions. The diagram below illustrates how an agent’s decision flow must pass through a single, enforceable checkpoint where automated policy meets human oversight.

This flow forces autonomy to yield to governance whenever required.

It ensures that high-risk decisions leave the agent boundary and enter a structured, auditable human workflow, creating a chain of accountability that automation alone cannot provide.

Primitive 1: Approval Gates (Pre-Action Checks)

Approval Gates are deliberate intervention points - formal breakpoints in agent execution where autonomous action is suspended until a human explicitly authorizes the next step. These gates function as runtime contractual boundaries: the agent proposes an action, but execution halts pending human approval.

Approval gates are not arbitrary; they are triggered by signals generated by the Observability Stack (Part 15) and Formal Verification (Part 9). These include:

- policy-as-code violations or uncertainty,

- divergence spikes between agent versions,

- anomalous memory influence patterns,

- deviations in workflow lineage,

- high-risk tool invocation,

- or any action likely to violate a formal invariant.

In practice, approval gates turn latent risk indicators into actionable checkpoints. Instead of allowing the agent to continue blindly, the orchestrator pauses and routes context-rich decision summaries to a human reviewer. A human then approves, rejects, modifies, or escalates the decision - creating a controlled autonomy model.
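A minimal Python sketch of an approval gate, assuming the orchestrator already receives the risk signals listed above as simple flags and scores. The names `RiskSignals`, `ProposedAction`, `GateDecision`, and `approval_gate`, and both thresholds, are hypothetical; a real gate would pull these signals from the observability and formal-verification layers.

```python
from dataclasses import dataclass, field
from enum import Enum


class GateDecision(Enum):
    AUTO_APPROVE = "auto_approve"    # no trigger fired; the agent may proceed
    PENDING_HUMAN = "pending_human"  # execution halts until a reviewer acts


@dataclass
class RiskSignals:
    # Hypothetical signal fields mirroring the gate triggers listed above.
    policy_violation: bool = False
    policy_uncertain: bool = False
    version_divergence: float = 0.0  # 0.0 means versions behave identically
    memory_influence_anomaly: bool = False
    lineage_deviation: bool = False
    high_risk_tool: bool = False
    invariant_risk: float = 0.0      # estimated chance of violating a formal invariant


@dataclass
class ProposedAction:
    agent_id: str
    tool: str
    arguments: dict
    signals: RiskSignals = field(default_factory=RiskSignals)


# Illustrative thresholds; in practice these would live in policy-as-code (Part 4).
DIVERGENCE_THRESHOLD = 0.2
INVARIANT_RISK_THRESHOLD = 0.1


def triggered_reasons(s: RiskSignals) -> list:
    """Return the name of every gate trigger that fired."""
    checks = [
        ("policy", s.policy_violation or s.policy_uncertain),
        ("version_divergence", s.version_divergence > DIVERGENCE_THRESHOLD),
        ("memory_influence", s.memory_influence_anomaly),
        ("lineage_deviation", s.lineage_deviation),
        ("high_risk_tool", s.high_risk_tool),
        ("invariant_risk", s.invariant_risk > INVARIANT_RISK_THRESHOLD),
    ]
    return [name for name, fired in checks if fired]


def approval_gate(action: ProposedAction):
    """Suspend the action for human review if any trigger fires."""
    reasons = triggered_reasons(action.signals)
    if reasons:
        return GateDecision.PENDING_HUMAN, reasons
    return GateDecision.AUTO_APPROVE, []


# Usage: a high-risk tool call is held for a human instead of executing.
action = ProposedAction("agent-7", "wire_transfer", {"amount": 50_000},
                        RiskSignals(high_risk_tool=True))
decision, reasons = approval_gate(action)
print(decision, reasons)
```

The gate only decides whether to pause; the human outcome (approve, reject, modify, or escalate) is recorded separately, which keeps the automated check and the human decision independently auditable.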

Primitive 2: Break-Glass Procedures (Emergency Human Override)

Even with approval gates, agents can enter harmful states: unsafe loops, escalating retries, corrupted memory regions, or runaway behaviors. Break-glass procedures exist to immediately seize control in these emergency conditions.

A break-glass event triggers a protocol that includes:

- Halting all agent activity.

- Revoking credentials (Part 3).

- Freezing memory layers for forensic analysis (Part 14).

- Capturing a deterministic replay snapshot (Part 8).

- Logging an immutable audit record (Part 5).

- Routing the incident to a human operator.

- Preventing resumption until explicit approval is granted.

Break-glass operations are not everyday tools - they are the equivalent of pulling a fire alarm. They exist for catastrophic scenarios where automated systems cannot safely recover on their own.
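The protocol can be sketched as a controller that performs each step in order and refuses to resume without a named approver. This assumes a hypothetical `AgentRuntime` stand-in for the identity, memory, replay, and audit subsystems; a production system would call those services rather than flip booleans, and would persist the serialized snapshot rather than only its hash.

```python
import hashlib
import json
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AgentRuntime:
    # Hypothetical in-memory stand-in for one agent and its subsystems.
    agent_id: str
    running: bool = True
    credentials_valid: bool = True
    memory_frozen: bool = False
    state: dict = field(default_factory=dict)


@dataclass
class BreakGlassController:
    audit_log: list = field(default_factory=list)  # append-only in this sketch
    incidents: list = field(default_factory=list)  # queue read by human operators
    halted: set = field(default_factory=set)

    def trigger(self, agent: AgentRuntime, reason: str, operator: str) -> dict:
        """Run the break-glass protocol steps in order."""
        agent.running = False                        # halt agent activity
        agent.credentials_valid = False              # revoke credentials (Part 3)
        agent.memory_frozen = True                   # freeze memory for forensics (Part 14)
        snapshot = json.dumps(agent.state, sort_keys=True)  # serialized state for replay (Part 8)
        record = {
            "event": "break_glass",
            "agent_id": agent.agent_id,
            "reason": reason,
            "operator": operator,
            "timestamp": time.time(),
            "snapshot_sha256": hashlib.sha256(snapshot.encode()).hexdigest(),
        }
        self.audit_log.append(record)                # audit record (Part 5)
        self.incidents.append(record)                # route to a human operator
        self.halted.add(agent.agent_id)              # block resumption
        return record

    def resume(self, agent: AgentRuntime, approver: Optional[str]) -> bool:
        """Resume only with explicit human approval."""
        if agent.agent_id not in self.halted:
            return True                              # nothing to resume
        if not approver:
            return False                             # no approval: stay halted
        self.halted.discard(agent.agent_id)
        self.audit_log.append({"event": "resume", "agent_id": agent.agent_id,
                               "approver": approver, "timestamp": time.time()})
        agent.running = True
        agent.memory_frozen = False
        # Credentials stay revoked here; re-issuing them is a separate
        # identity-layer step (Part 3) that this sketch does not model.
        return True
```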

Primitive 3: Reviewer Workflows (Separation of Duties)

Reviewer workflows formalize who reviews decisions and how the review process should operate. This requires strong separation of duties, ensuring no single role has unilateral authority over high-risk decisions.

A typical separation includes:

- Primary Reviewers, who handle routine or low-risk decisions,

- Compliance Reviewers, responsible for regulated domains,

- Security Reviewers, who assess identity, memory, and data risks,

- Executive Approvers, who accept risk on behalf of the organization.

Reviewer workflows follow a governance lifecycle:

Triage → Review → Annotate → Approve/Reject → Escalate → Log

- Triage determines who should handle the decision.

- Reviewers examine provenance, semantic traces, memory influence, and policy context.

- Annotations capture rationale and add human intent to the decision record.

- Approval or rejection finalizes the decision.

- Escalation elevates high-risk or unclear cases to higher authority.

- Finally, every step is logged in a verifiable audit structure.

This transforms human oversight from “someone glances at the output” into a structured, accountable digital governance process.
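One way to encode separation of duties is a review case that knows which roles must sign off for its risk level and rejects any attempt by one person to cover two roles or review their own request. The role-to-risk table, the `ReviewCase` class, and the status names are hypothetical; the sketch also trusts the `role` argument, whereas a real system would derive it from the reviewer's authenticated identity (Part 3).

```python
from dataclasses import dataclass, field
from enum import Enum


class Role(Enum):
    PRIMARY = "primary"
    COMPLIANCE = "compliance"
    SECURITY = "security"
    EXECUTIVE = "executive"


# Hypothetical triage table: which roles must sign off at each risk level.
REQUIRED_ROLES = {
    "low": {Role.PRIMARY},
    "regulated": {Role.PRIMARY, Role.COMPLIANCE},
    "high": {Role.SECURITY, Role.EXECUTIVE},
}


@dataclass
class ReviewCase:
    case_id: str
    risk: str                 # assigned during triage
    requested_by: str         # agent or person that proposed the action
    approvals: dict = field(default_factory=dict)  # Role -> reviewer id
    log: list = field(default_factory=list)
    status: str = "triaged"

    def review(self, reviewer: str, role: Role, approve: bool, rationale: str) -> str:
        """Record one reviewer's annotated decision and return the new status."""
        if self.status in ("approved", "rejected"):
            raise RuntimeError(f"case {self.case_id} is already {self.status}")
        if role not in REQUIRED_ROLES[self.risk]:
            raise PermissionError(f"{role.value} cannot review risk level {self.risk!r}")
        if reviewer == self.requested_by:
            raise PermissionError("the requester cannot review their own case")
        if reviewer in self.approvals.values():
            raise PermissionError("one person cannot sign off under two roles")
        if not rationale.strip():
            raise ValueError("a written rationale (annotation) is required")
        self.log.append(("annotate", reviewer, role.value, rationale))
        if approve:
            self.approvals[role] = reviewer
            done = REQUIRED_ROLES[self.risk] <= set(self.approvals)
            self.status = "approved" if done else "in_review"
        else:
            self.status = "rejected"  # any required reviewer can veto
        self.log.append((self.status, reviewer, role.value))
        return self.status

    def escalate(self, reason: str) -> None:
        """Send an unclear case to the highest-authority reviewer set."""
        self.log.append(("escalate", self.risk, "high", reason))
        self.risk = "high"
```

Any required reviewer can veto, while approval needs every required role; escalation moves the case to the `high` reviewer set and logs why.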

Primitive 4: Human-Graded Policy Exceptions

Policies cannot predict every scenario.

Sometimes the safest or most ethical action is to intentionally violate a rule - but only if done through a controlled process.

Human reviewers must be able to:

- request a policy exception,

- document justification,

- evaluate the blast radius of deviation,

- timestamp and sign the exception,

- and specify duration or boundary limits.

Policy exceptions must be transparent, traceable, and reversible. They integrate into the organization’s governance model and form part of the audit lineage.

This systematic handling of exceptions prevents informal “side-channel approvals” and ensures all deviations are reasoned, documented, and compliant.
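A sketch of a time-boxed, signed exception record, assuming an HMAC key held by the governance service. HMAC stands in here for a proper digital signature; a real deployment would more likely use asymmetric signatures so verifiers never hold the signing key. `PolicyException`, `grant_exception`, and `exception_applies` are hypothetical names.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass, replace
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class PolicyException:
    policy_id: str
    requested_by: str
    justification: str
    blast_radius: str   # reviewer's assessment of what the deviation could affect
    scope: str          # boundary limit: the only target the exception covers
    granted_at: str     # ISO-8601 UTC timestamp
    expires_at: str     # duration limit
    signature: str = ""


def _payload(exc: PolicyException) -> bytes:
    """Canonical bytes of every field except the signature."""
    fields = asdict(exc)
    fields.pop("signature")
    return json.dumps(fields, sort_keys=True).encode()


def _sign(exc: PolicyException, key: bytes) -> str:
    return hmac.new(key, _payload(exc), hashlib.sha256).hexdigest()


def grant_exception(policy_id, requested_by, justification, blast_radius, scope,
                    duration: timedelta, key: bytes, now=None) -> PolicyException:
    """Create a timestamped, signed, time-boxed exception."""
    if not justification.strip():
        raise ValueError("a documented justification is required")
    now = now or datetime.now(timezone.utc)
    unsigned = PolicyException(policy_id, requested_by, justification, blast_radius,
                               scope, now.isoformat(), (now + duration).isoformat())
    return replace(unsigned, signature=_sign(unsigned, key))


def exception_applies(exc, policy_id, scope, key, now=None, revoked=frozenset()) -> bool:
    """Check signature, revocation, scope, and expiry before honoring an exception."""
    now = now or datetime.now(timezone.utc)
    if not hmac.compare_digest(_sign(exc, key), exc.signature):
        return False  # altered after signing
    if exc.signature in revoked:
        return False  # reversed by a human
    return (exc.policy_id == policy_id and exc.scope == scope
            and now < datetime.fromisoformat(exc.expires_at))
```

Because every field except the signature is covered by the HMAC, extending `expires_at` or widening `scope` after the fact invalidates the exception, and adding its signature to the revocation set reverses it.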

Primitive 5: Accountable Decision Logs

Accountable decision logs form the final, immutable chain of custody for agent actions. These logs must establish a clear mapping between:

- agent behavior,

- the human decision that shaped it,

- the rationale for that decision,

- and the exact context in which the decision occurred.

Crucially, the human’s decision - approve, reject, modify - is the final event in the agent’s trace (Part 15). This event must be cryptographically linked to:

- the Verifiable Audit Log (Part 5),

- the Semantic Trace Event (Part 15),

- and the provenance chain.

Decision logs serve as the authoritative evidence in compliance reviews, incident investigations, and risk audits. They form the backbone of accountable AI governance.
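Hash chaining is one common way to make decision records tamper-evident and to bind them to other artifacts. In this sketch each entry stores references to the related audit-log entry, trace event, and provenance record, plus the hash of the previous entry. `DecisionLog` and its field names are assumptions, not the schema from Parts 5 or 15, and a hash chain on its own is tamper-evident rather than immutable; in practice the latest hash would also be anchored somewhere the log's operator cannot rewrite.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(obj: dict) -> str:
    return hashlib.sha256(json.dumps(obj, sort_keys=True).encode()).hexdigest()


@dataclass
class DecisionLog:
    entries: list = field(default_factory=list)

    def append(self, *, action_id, decision, reviewer, rationale, context,
               audit_entry_hash, trace_event_id, provenance_hash) -> dict:
        """Append one human decision, linked to related records and the prior entry."""
        if decision not in {"approve", "reject", "modify"}:
            raise ValueError(f"unknown decision {decision!r}")
        body = {
            "action_id": action_id,
            "decision": decision,
            "reviewer": reviewer,
            "rationale": rationale,
            "context_hash": _digest(context),        # full context stored elsewhere
            "audit_entry_hash": audit_entry_hash,    # link to audit log (Part 5)
            "trace_event_id": trace_event_id,        # link to semantic trace (Part 15)
            "provenance_hash": provenance_hash,      # link to provenance chain
            "prev_hash": self.entries[-1]["entry_hash"] if self.entries else GENESIS,
        }
        entry = {**body, "entry_hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev = GENESIS
        for e in self.entries:
            body = {k: v for k, v in e.items() if k != "entry_hash"}
            if body["prev_hash"] != prev or _digest(body) != e["entry_hash"]:
                return False
            prev = e["entry_hash"]
        return True
```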

Primitive 6: Human-Operated Rollback & Detonation Mechanisms

When a system-level fault is detected (model drift, memory poisoning, corrupted DAGs, or divergence events), humans must be able to roll back agents to a safer version or detonate faulty workflows entirely.

Human-operated rollback is not merely reverting a model; it encompasses:

- restoring stable memory states,

- switching to pre-validated agent versions (Part 11),

- freezing risky tools,

- and routing traffic away from compromised workflows.

Detonation goes further, allowing the operator to terminate all autonomous actions for a given tenant, tool, or agent cluster.

These controls turn human authority into a tangible system-level safety mechanism.
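A sketch of the rollback and detonation controls against a hypothetical control-plane object. `Deployment`, `rollback`, and `detonate` are illustrative names; version selection simply picks the newest pre-validated version other than the active one, and draining a workflow is modeled as setting its routing weight to zero.

```python
from dataclasses import dataclass, field


@dataclass
class Deployment:
    # Hypothetical control-plane view of one agent cluster.
    tenant: str
    cluster: str
    active_version: str
    validated_versions: list          # pre-validated versions, oldest first (Part 11)
    memory_snapshots: dict            # version -> stable memory snapshot id (Part 14)
    frozen_tools: set = field(default_factory=set)
    traffic: dict = field(default_factory=dict)  # workflow -> routing weight
    autonomous: bool = True

    def rollback(self, risky_tools: set, compromised_workflows: set) -> list:
        """Human-initiated rollback; returns the steps taken, in order."""
        candidates = [v for v in self.validated_versions if v != self.active_version]
        if not candidates:
            raise RuntimeError("no other pre-validated version to roll back to")
        target = candidates[-1]  # newest validated version other than the faulty one
        steps = [f"restore memory snapshot {self.memory_snapshots[target]}",
                 f"switch {self.active_version} -> {target}"]
        self.active_version = target
        self.frozen_tools |= set(risky_tools)
        steps.append(f"freeze tools {sorted(risky_tools)}")
        for wf in compromised_workflows:
            self.traffic[wf] = 0.0  # weight 0: no new traffic routed here
        steps.append(f"drain workflows {sorted(compromised_workflows)}")
        return steps


def detonate(deployments: list, *, tenant=None, cluster=None, tool=None) -> list:
    """Terminate autonomy for exactly one scope: a tenant, a cluster, or a tool."""
    if sum(x is not None for x in (tenant, cluster, tool)) != 1:
        raise ValueError("specify exactly one of tenant, cluster, or tool")
    affected = []
    for d in deployments:
        if tool is not None:
            d.frozen_tools.add(tool)       # tool scope: block the tool everywhere
        elif d.tenant == tenant or d.cluster == cluster:
            d.autonomous = False           # tenant/cluster scope: no autonomous actions
        else:
            continue
        affected.append(d.cluster)
    return affected
```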

Primitive 7: Incorporating Human Feedback Into Future Behavior

Human-in-the-loop decisions should not disappear after use. They must form part of a continuous governance and improvement cycle.

This is closely related to Reinforcement Learning from Human Feedback (RLHF), in which human judgments are used as a training signal for a model.

In the context of autonomous agents, the feedback loop extends beyond model training to incorporate:

- reviewer corrections,

- exception rationales,

- risk escalation patterns,

- rejection justifications,

- and approval annotations.

These human insights flow back into:

- policy rules (Part 4),

- agent version constraints (Part 11),

- routing heuristics (Part 13),

- formal invariants (Part 9),

- safety checks and approval gate logic,

- and memory governance signals (Part 14).

This makes HITL not only a safety boundary but also a knowledge input for safer future behavior.
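The aggregation step can be as simple as counting reviewer outcomes per action type and turning strong patterns into proposals. This is a hypothetical sketch, not RLHF in the model-training sense: it does not update model weights, and every proposal it emits would itself go through human review before changing a policy or gate. `ReviewOutcome`, `rationale_tag`, `propose_governance_updates`, and the thresholds are assumptions.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewOutcome:
    action_type: str
    decision: str            # "approve" | "reject" | "modify" | "escalate"
    rationale_tag: str = ""  # hypothetical normalized label from reviewer annotations


def propose_governance_updates(outcomes, min_samples=20, reject_rate=0.3, approve_rate=0.98):
    """Turn aggregated human decisions into proposals; a human still approves each one."""
    by_type = defaultdict(list)
    for o in outcomes:
        by_type[o.action_type].append(o)
    proposals = []
    for action_type, items in sorted(by_type.items()):
        n = len(items)
        if n < min_samples:
            continue  # too little evidence to change anything
        counts = Counter(o.decision for o in items)
        if counts["reject"] / n >= reject_rate:
            # Most common rejection reason becomes the candidate policy rule.
            tags = Counter(o.rationale_tag for o in items if o.decision == "reject")
            proposals.append(("add_policy_rule", action_type, tags.most_common(1)[0][0]))
        elif counts["approve"] / n >= approve_rate:
            # Near-universal approval suggests the gate may be too strict.
            proposals.append(("consider_relaxing_gate", action_type,
                              f"{counts['approve']}/{n} approved"))
    return proposals
```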

Why This Matters

All prior safety primitives - identity, attestation, policies, audit, observability, memory governance, orchestration - are necessary but not sufficient. They control the system’s mechanics but cannot replace human judgment, accountability, and ethical decision-making.

Human-in-the-Loop Governance is the final layer of trust:

- It ensures that autonomy does not override institutional responsibility.

- It turns semantic observability into actionable decisions.

- It prevents catastrophic drift, abuse, or unintended consequences.

- It provides a formal process for intervention, override, and risk acceptance.

- It binds human accountability to autonomous behavior in a verifiable trace.

HITL closes the loop, transforming agent systems from autonomous actors into governed, auditable, and responsible systems aligned with human and organizational values.

Practical Next Steps

- Define high-risk actions requiring human approval.

- Implement policy triggers for approval gates.

- Build reviewer workflows with clear role separation.

- Integrate break-glass emergency procedures.

- Use verifiable logs for decision lineage.

- Connect HITL events to provenance and semantic traces.

- Use deterministic replay for post-decision forensics.

- Add governance learning loops that feed human feedback back into policies, gates, and invariants.

- Treat HITL as a first-class subsystem, not a bolt-on feature.

Human oversight is not a relic of pre-automation - it is the keystone that makes autonomous systems safe, governable, and trustworthy.