The Missing Primitives for Trustworthy AI Agents

This installment continues our exploration of the primitives required to build predictable, safe, production-grade AI systems:

- Part 0 - Introduction

- Part 1 - End-to-End Encryption

- Part 2 - Prompt Injection Protection

- Part 3 - Agent Identity and Attestation

- Part 4 - Policy-as-Code Enforcement

- Part 5 - Verifiable Audit Logs

- Part 6 - Kill Switches and Circuit Breakers

- Part 7 - Adversarial Robustness

- Part 8 - Deterministic Replay

- Part 9 - Formal Verification of Constraints

- Part 10 - Secure Multi-Agent Protocols

- Part 11 - Agent Lifecycle Management

- Part 12 - Resource Governance

- Part 13 - Distributed Agent Orchestration

- Part 14 - Secure Memory Governance

- Part 15 - Agent-Native Observability

- Part 16 - Human-in-the-Loop Governance

- Part 17 - Conclusion (Operational Risk Modeling)

Agent-Native Observability (Part 15)

Modern agent systems produce behavior, not just responses.

This behavior spans:

- planning

- decomposition

- multi-agent collaboration

- memory accesses

- tool sequencing

- retries and reconsiderations

- evolving workflows

- differing reasoning paths between versions

Traditional logs and metrics can tell you what happened, but not why it happened or what the agent believed was happening.

Agent-Native Observability adds semantic-level visibility over:

- reasoning

- workflow structure

- tool usage

- memory influence

- version divergence

- agent-to-agent communication

- provenance of every decision

This is the only way to debug, trust, and safely operate agents in production.

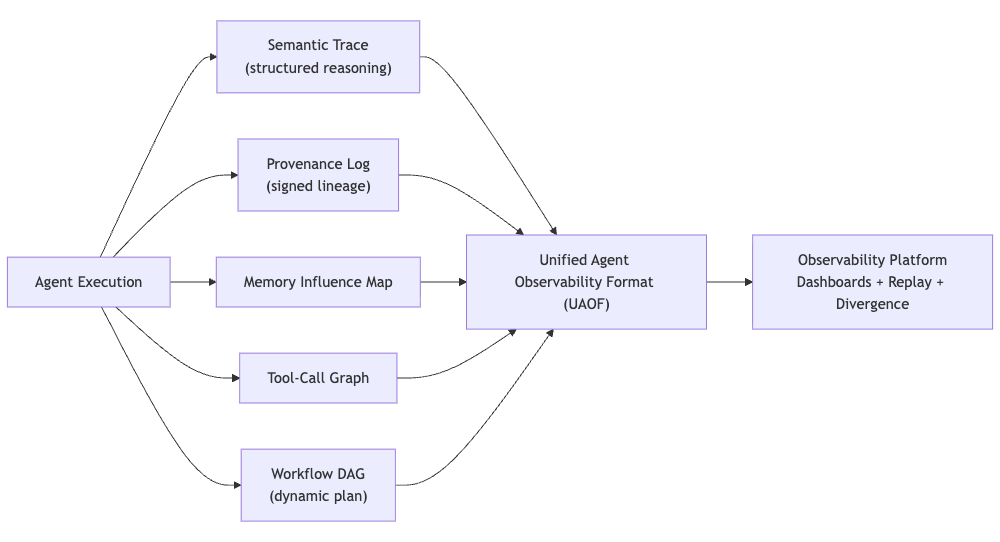

Diagram: Agent-Native Observability Architecture

Primitive 1: Semantic Reasoning Traces

An agent’s internal reasoning is the root cause of its decisions. But raw chain-of-thought is:

- insecure

- privacy-sensitive

- potentially leaky

- non-deterministic

- unstructured

- not suitable for storage or audit

Instead, we capture structured semantic traces - a redacted, normalized version of the agent’s reasoning, stored as a stable semantic representation rather than free-form text. Each trace step describes:

- the intent behind an action

- the type of step (plan refinement, tool call, memory access)

- timing information

- the agent version

- a reference to the Verifiable Audit Log (Part 5)

This provides interpretability without exposing sensitive thoughts.

Example: Structured Semantic Trace Event

```python
from dataclasses import dataclass


@dataclass
class SemanticTraceStep:
    """Represents one logical reasoning step for observability."""

    run_id: str
    step_index: int
    agent_id: str
    agent_version: str
    intent: str  # e.g., "tool_selection", "summarization", "plan_modification"
    action_type: str  # e.g., "llm_call", "memory_read", "tool_execution"
    duration_ms: int
    audit_log_ref: str  # Link to the corresponding Verifiable Audit Log entry
```

Example JSON output

```json
{
  "run_id": "task-2487",
  "step_index": 3,
  "agent_id": "retrieval-agent",
  "agent_version": "v4.2.1",
  "intent": "memory_lookup",
  "action_type": "memory_read",
  "duration_ms": 42,
  "audit_log_ref": "audit:task-2487:step3"
}
```

This is how we bridge internal reasoning (semantic) with external accountability (audit logs).
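You can sketch the redaction step as a projection. Copy only whitelisted fields onto the trace schema, and collapse anything outside a controlled vocabulary. The sketch below assumes that a raw agent event arrives as a dict, which may carry free-form reasoning under a hypothetical `thoughts` key. `ALLOWED_INTENTS`, `ALLOWED_ACTIONS`, and `to_trace_step` are illustrative names, not an existing API. The `audit:<run>:step<n>` reference format simply mirrors the example above.

```python
import json
from dataclasses import asdict, dataclass

# Hypothetical controlled vocabularies; a real deployment would define its own.
ALLOWED_INTENTS = {"memory_lookup", "tool_selection", "summarization", "plan_modification"}
ALLOWED_ACTIONS = {"llm_call", "memory_read", "tool_execution"}


@dataclass(frozen=True)
class SemanticTraceStep:
    run_id: str
    step_index: int
    agent_id: str
    agent_version: str
    intent: str
    action_type: str
    duration_ms: int
    audit_log_ref: str


def to_trace_step(raw: dict) -> SemanticTraceStep:
    """Project a raw agent event onto the semantic schema.

    Only known fields are copied, so free-form reasoning text
    (such as a raw "thoughts" field) never reaches the trace.
    """
    intent = raw.get("intent", "").strip().lower().replace(" ", "_")
    action = raw.get("action_type", "")
    if action not in ALLOWED_ACTIONS:
        raise ValueError(f"unknown action type: {action!r}")
    return SemanticTraceStep(
        run_id=raw["run_id"],
        step_index=raw["step_index"],
        agent_id=raw["agent_id"],
        agent_version=raw["agent_version"],
        # Intents outside the vocabulary collapse to "other" instead of leaking text.
        intent=intent if intent in ALLOWED_INTENTS else "other",
        action_type=action,
        duration_ms=int(raw["duration_ms"]),
        # Assumed reference format, mirroring the JSON example.
        audit_log_ref=f"audit:{raw['run_id']}:step{raw['step_index']}",
    )


raw_event = {
    "run_id": "task-2487", "step_index": 3,
    "agent_id": "retrieval-agent", "agent_version": "v4.2.1",
    "intent": "Memory lookup", "action_type": "memory_read",
    "duration_ms": 42.7,
    "thoughts": "The user is probably asking about last month's invoice...",
}
step = to_trace_step(raw_event)
print(json.dumps(asdict(step), indent=2))
```

The allowlist approach is deliberately conservative. A new intent shows up as `other` until someone adds it to the vocabulary, so the trace never silently absorbs unreviewed text.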

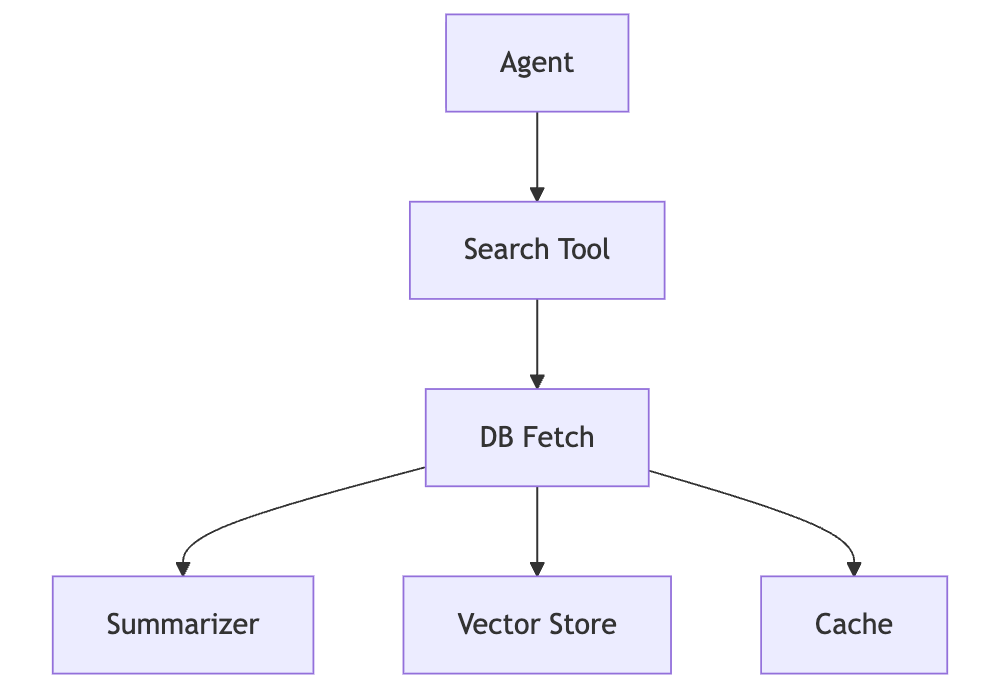

Primitive 2: Tool-Call Graphs & Execution Trees

Agents increasingly behave as orchestration layers over tools.

Without tool-call graphs, we cannot see:

- tool thrashing (repeated, unproductive calls)

- unauthorized or unsafe tool usage

- recursion loops

- bottlenecks

- cascading failures

- version-induced tool behavior differences

Tool-call graphs convert messy logs into structured execution trees.

Diagram: Tool-Call Graph (Conceptual)

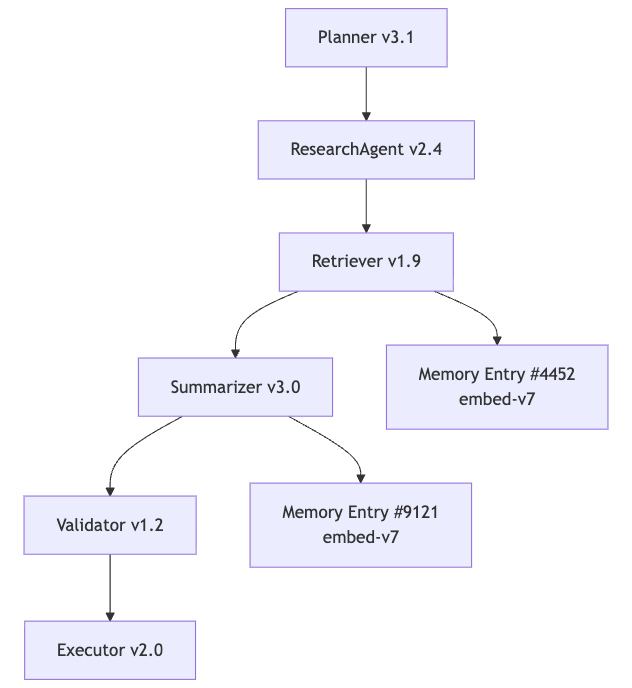
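Here is a minimal sketch of a tool-call graph built from flat call records. It shows how three of the failure modes listed above fall out of the tree structure: thrashing, recursion loops, and bottlenecks. It assumes that each call carries a `parent_id` and a precomputed `args_digest` (a hash of normalized arguments). It also assumes that parent links form a tree. The `ToolCall` and `ToolCallGraph` names are hypothetical.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ToolCall:
    call_id: str
    parent_id: Optional[str]  # None for calls made directly by the agent
    tool: str
    args_digest: str          # hash of normalized arguments (assumed precomputed)
    duration_ms: int


class ToolCallGraph:
    """Execution tree of tool calls; assumes parent links contain no cycles."""

    def __init__(self, calls: list) -> None:
        self.calls = {c.call_id: c for c in calls}
        self.children = defaultdict(list)
        for c in calls:
            self.children[c.parent_id].append(c.call_id)

    def ancestor_tools(self, call_id: str) -> list:
        """Tools on the path from this call up to the root."""
        chain, parent = [], self.calls[call_id].parent_id
        while parent is not None:
            chain.append(self.calls[parent].tool)
            parent = self.calls[parent].parent_id
        return chain

    def recursion(self) -> list:
        """Calls whose tool already appears among their ancestors."""
        return [cid for cid, c in self.calls.items() if c.tool in self.ancestor_tools(cid)]

    def thrashing(self, threshold: int = 3) -> dict:
        """(tool, args_digest) pairs invoked at least `threshold` times."""
        counts = Counter((c.tool, c.args_digest) for c in self.calls.values())
        return {k: n for k, n in counts.items() if n >= threshold}

    def time_by_tool(self) -> dict:
        """Total wall time per tool, a first cut at bottleneck detection."""
        totals = defaultdict(int)
        for c in self.calls.values():
            totals[c.tool] += c.duration_ms
        return dict(totals)


calls = [
    ToolCall("c1", None, "planner", "a", 120),
    ToolCall("c2", "c1", "search", "q1", 300),
    ToolCall("c3", "c1", "search", "q1", 310),
    ToolCall("c4", "c1", "search", "q1", 295),
    ToolCall("c5", "c4", "planner", "b", 90),
]
g = ToolCallGraph(calls)
print(g.recursion())     # ['c5']
print(g.thrashing())     # {('search', 'q1'): 3}
print(g.time_by_tool())  # {'planner': 210, 'search': 905}
```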

Primitive 3: Multi-Agent Workflow Provenance (Integrated Into Observability)

Provenance is the data structure that makes observability work.

It answers:

- Who produced each step?

- Which version of the agent?

- What memory entries influenced it?

- Which tools were invoked?

- Where did the retrieved content originate?

- How did this step connect to prior steps?

Provenance is a cryptographically signed, append-only, structured timeline correlating:

- agents

- tools

- memory slices

- workflow nodes

- version identifiers

- policies applied

It is the semantic analog of a distributed trace.
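The "cryptographically signed, append-only" property can be sketched as a hash chain. Each entry commits to the hash of its predecessor, so altering any earlier entry breaks verification of the log. This sketch substitutes HMAC-SHA256 with a shared key for real signatures. A production system would more plausibly use per-agent asymmetric keys tied to the identities from Part 3. The entry fields and values are illustrative only.

```python
import hashlib
import hmac
import json
from typing import Any

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class ProvenanceLog:
    """Append-only, hash-chained provenance timeline (HMAC stands in for signatures)."""

    def __init__(self, key: bytes) -> None:
        self._key = key
        self.entries: list = []

    def _digest(self, body: dict, prev_hash: str) -> str:
        # Canonical JSON so the same body always hashes the same way.
        canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256((prev_hash + canonical).encode()).hexdigest()

    def _sign(self, entry_hash: str) -> str:
        return hmac.new(self._key, entry_hash.encode(), hashlib.sha256).hexdigest()

    def append(self, body: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        entry_hash = self._digest(body, prev_hash)
        entry = {"body": body, "prev": prev_hash, "hash": entry_hash, "sig": self._sign(entry_hash)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every link and signature from the genesis value forward."""
        prev_hash = GENESIS
        for e in self.entries:
            if e["prev"] != prev_hash or self._digest(e["body"], prev_hash) != e["hash"]:
                return False
            if not hmac.compare_digest(self._sign(e["hash"]), e["sig"]):
                return False
            prev_hash = e["hash"]
        return True


log = ProvenanceLog(key=b"demo-key-not-for-production")
log.append({"agent": "planner", "version": "v2.0.3", "step": 1,
            "tools": [], "memory": [], "policies": ["pii-redaction"]})
log.append({"agent": "retrieval-agent", "version": "v4.2.1", "step": 2, "parents": [1],
            "tools": ["vector_search"], "memory": ["mem:8812"], "policies": []})
print(log.verify())  # True
log.entries[0]["body"]["version"] = "v9.9.9"  # tamper with history
print(log.verify())  # False
```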

Diagram: Multi-Agent Provenance Graph

Primitive 4: Divergence Analysis (Version vs Version)

Divergence analysis detects when a new agent version behaves differently from the previous one.

It answers:

- Does the new version produce the same plan structure?

- Does it choose the same tools?

- Does it reference the same memory slices?

- Do retrieved embeddings differ significantly?

- Does the workflow DAG reshape?

- Does latency or cost change?

This is essential for:

- shadow execution

- canaries

- rollout gates

- regression detection

- safety controls
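Here is a minimal sketch of version-vs-version divergence scoring for two runs of the same task, for example from shadow execution. It scores several of the questions above: plan structure, tool choice, memory slices, latency, and cost. Plan and tool sequences are compared with `difflib.SequenceMatcher`, memory sets with Jaccard distance, and latency and cost as relative change. The `RunSummary` shape, the scoring choices, and the thresholds are all assumptions for illustration. Embedding-level comparison is left out.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher


@dataclass
class RunSummary:
    plan_steps: list   # ordered step types taken from the semantic trace
    tools: list        # ordered tool invocations
    memory_ids: set    # memory entries retrieved during the run
    latency_ms: int
    cost_usd: float


def jaccard(a: set, b: set) -> float:
    return 1.0 if not a and not b else len(a & b) / len(a | b)


def divergence(baseline: RunSummary, candidate: RunSummary) -> dict:
    """Per-dimension scores; 0.0 means identical behavior on that dimension."""
    return {
        "plan": 1 - SequenceMatcher(None, baseline.plan_steps, candidate.plan_steps).ratio(),
        "tools": 1 - SequenceMatcher(None, baseline.tools, candidate.tools).ratio(),
        "memory": 1 - jaccard(baseline.memory_ids, candidate.memory_ids),
        "latency": abs(candidate.latency_ms - baseline.latency_ms) / max(baseline.latency_ms, 1),
        "cost": abs(candidate.cost_usd - baseline.cost_usd) / max(baseline.cost_usd, 1e-9),
    }


def rollout_gate(scores: dict, limits: dict) -> list:
    """Dimensions that exceed their limit; dimensions without a limit never fail."""
    return [k for k, v in scores.items() if v > limits.get(k, float("inf"))]


v1 = RunSummary(["plan", "retrieve", "answer"], ["search", "summarize"], {"m1", "m2"}, 800, 0.010)
v2 = RunSummary(["plan", "retrieve", "retrieve", "answer"], ["search", "search", "summarize"],
                {"m1", "m3"}, 1300, 0.016)
limits = {"plan": 0.2, "tools": 0.25, "memory": 0.3, "latency": 0.5}  # hypothetical
scores = divergence(v1, v2)
print(rollout_gate(scores, limits))  # ['memory', 'latency']
```

In this example, the new version's plan and tool sequence stay close to the baseline. It pulls in a different memory entry and runs about 60% slower, which is the kind of change a rollout gate should surface before promotion.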

Primitive 5: Memory Influence Tracing

Memory is often the true cause of agent behavior.

Influence tracing shows:

- which memory entries were retrieved

- their similarity scores

- the embeddings used

- which agent originally wrote the data

- under which schema version

- whether the retrieved memory passed poisoning checks

Memory influence is necessary to debug:

- hallucinations

- drift

- stale answers

- subtle contamination

- cross-tenant leakage

This builds directly on Part 14 (Secure Memory Governance).
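An influence record captures, for each retrieval, the fields listed above. A simple audit pass over those records can then flag the contamination and leakage cases. In this minimal sketch, the record layout, the tenant and schema identifiers, and the `audit_influences` helper are all hypothetical. Findings are ordered by similarity, so the memories most likely to have shaped the output come first.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MemoryInfluence:
    step_index: int               # semantic trace step that consumed this memory
    memory_id: str
    similarity: float             # retrieval score, e.g. cosine similarity
    embedding_model: str          # embedding that produced the score
    written_by: str               # agent that originally wrote the entry
    schema_version: str
    tenant_id: str
    passed_poisoning_check: bool


def audit_influences(influences: list, run_tenant: str, current_schema: str) -> list:
    """Flag retrievals worth investigating, strongest influence first."""
    findings = []
    for m in sorted(influences, key=lambda m: m.similarity, reverse=True):
        if m.tenant_id != run_tenant:
            findings.append((m.memory_id, "cross-tenant retrieval"))
        if not m.passed_poisoning_check:
            findings.append((m.memory_id, "failed poisoning check"))
        if m.schema_version != current_schema:
            findings.append((m.memory_id, f"stale schema {m.schema_version}"))
    return findings


influences = [
    MemoryInfluence(3, "mem:101", 0.91, "embed-v2", "ingest-agent", "s3", "acme", True),
    MemoryInfluence(3, "mem:207", 0.88, "embed-v2", "notes-agent", "s2", "acme", True),
    MemoryInfluence(3, "mem:355", 0.74, "embed-v2", "web-agent", "s3", "globex", False),
]
for memory_id, reason in audit_influences(influences, run_tenant="acme", current_schema="s3"):
    print(memory_id, reason)
# mem:207 stale schema s2
# mem:355 cross-tenant retrieval
# mem:355 failed poisoning check
```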

Primitive 6: Workflow Lineage (Semantic DAGs)

Every multi-agent execution is a DAG - but not the kind statically defined in Airflow or Kubeflow.

Agent DAGs are:

- generated at runtime

- version-dependent

- memory-influenced

- plan-driven

- dynamically reshaped after retries

- capable of branching into speculative paths

Lineage reconstructs:

- the full semantic DAG

- intermediate states

- parent-child relationships

- the tool and memory influences

- final outputs and costs

Without lineage, debugging agents is guesswork.
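Lineage reconstruction can be sketched from step records that carry parent links. The sketch uses the standard library's `graphlib.TopologicalSorter` (Python 3.9+), which raises `CycleError` if the recorded links are not a DAG. It orders the steps, walks back from each final output to collect the steps that contributed to it, and rolls up their cost. Anything outside every output's lineage is reported as an abandoned branch. The `StepRecord` fields and the `final_answer` kind are assumptions for illustration.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter  # Python 3.9+
from typing import Any


@dataclass
class StepRecord:
    step_id: str
    agent_id: str
    kind: str                               # e.g. "plan", "tool_call", "final_answer"
    parents: list = field(default_factory=list)
    cost_usd: float = 0.0


def reconstruct(steps: list) -> dict:
    by_id = {s.step_id: s for s in steps}
    # TopologicalSorter expects node -> predecessors, which is exactly the parent links.
    order = list(TopologicalSorter({s.step_id: s.parents for s in steps}).static_order())

    def lineage(sid: str) -> set:
        """The step itself plus every transitive parent."""
        seen, stack = {sid}, [sid]
        while stack:
            for p in by_id[stack.pop()].parents:
                if p not in seen:
                    seen.add(p)
                    stack.append(p)
        return seen

    outputs = [sid for sid in order if by_id[sid].kind == "final_answer"]
    lineages = {out: lineage(out) for out in outputs}
    contributing = set().union(*lineages.values())
    return {
        "order": order,
        "cost": {out: round(sum(by_id[s].cost_usd for s in lin), 6) for out, lin in lineages.items()},
        "abandoned": [sid for sid in order if sid not in contributing],
    }


steps = [
    StepRecord("s1", "planner", "plan", [], 0.002),
    StepRecord("s2", "retrieval-agent", "memory_read", ["s1"], 0.001),
    StepRecord("s3", "research-agent", "tool_call", ["s1"], 0.004),  # speculative branch
    StepRecord("s4", "research-agent", "tool_call", ["s2"], 0.003),
    StepRecord("s5", "writer-agent", "final_answer", ["s2", "s4"], 0.005),
]
result = reconstruct(steps)
print(result["cost"])       # {'s5': 0.011}
print(result["abandoned"])  # ['s3']
```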

Primitive 7: Unified Agent Observability Format (UAOF)

UAOF merges all observability primitives into a single format:

- semantic reasoning traces

- provenance

- memory influence

- workflow DAGs

- tool-call graphs

- divergence metrics

- policy events

- resource governance events

UAOF enables:

- deterministic replay (Part 8)

- formal verification integration (Part 9)

- policy enforcement visibility (Part 4)

- safety rollouts (Part 11)

- orchestration-level observability (Part 13)

- memory governance linkage (Part 14)

UAOF is how observability becomes the foundation of trustworthy AI.
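UAOF is described here as a concept, not a published schema. The following is therefore only one possible shape: a shared envelope whose `kind` field names the primitive that produced the event and whose `payload` carries primitive-specific data. It is serialized as one JSON object per line. Every field name, the `EventKind` values, and `format_version` are hypothetical. The point is that a single envelope lets a replay or audit consumer merge all event types into one ordered stream.

```python
import json
from dataclasses import asdict, dataclass, field
from enum import Enum
from typing import Any, Optional


class EventKind(str, Enum):
    REASONING_TRACE = "reasoning_trace"
    PROVENANCE = "provenance"
    MEMORY_INFLUENCE = "memory_influence"
    WORKFLOW_NODE = "workflow_node"
    TOOL_CALL = "tool_call"
    DIVERGENCE = "divergence"
    POLICY = "policy"
    RESOURCE = "resource"


@dataclass(frozen=True)
class UAOFEvent:
    kind: EventKind
    run_id: str
    step_index: int
    agent_id: str
    agent_version: str
    payload: dict = field(default_factory=dict)  # primitive-specific fields
    audit_log_ref: Optional[str] = None
    format_version: str = "0.1"                  # hypothetical schema version

    def to_json(self) -> str:
        d = asdict(self)
        d["kind"] = self.kind.value
        return json.dumps(d, sort_keys=True)

    @classmethod
    def from_json(cls, line: str) -> "UAOFEvent":
        d: dict[str, Any] = json.loads(line)
        d["kind"] = EventKind(d["kind"])
        return cls(**d)


events = [
    UAOFEvent(EventKind.TOOL_CALL, "task-2487", 4, "retrieval-agent", "v4.2.1",
              {"tool": "vector_search"}),
    UAOFEvent(EventKind.REASONING_TRACE, "task-2487", 3, "retrieval-agent", "v4.2.1",
              {"intent": "memory_lookup"}, "audit:task-2487:step3"),
    UAOFEvent(EventKind.POLICY, "task-2487", 4, "retrieval-agent", "v4.2.1",
              {"decision": "allow"}),
]
stream = [e.to_json() for e in events]  # one JSON object per line
replayed = sorted((UAOFEvent.from_json(line) for line in stream), key=lambda e: e.step_index)
print([(e.step_index, e.kind.value) for e in replayed])
# [(3, 'reasoning_trace'), (4, 'tool_call'), (4, 'policy')]
```

Because `sorted` is stable, events that share a step index keep their emission order. A replay consumer (Part 8) can rely on that ordering without a separate sequence number, though a real format would likely add one.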

Why Traditional Observability Fails for Agents

Traditional observability assumes deterministic paths, predictable interfaces, and stable execution graphs. Agents violate every one of those assumptions. Their workflows emerge dynamically, shaped by memory, model version, tool outputs, and internal reasoning steps.

Traditional traces map a highway - a fixed, known path.

Agent observability must map a dynamic river delta - the path is fluid, self-determined, and constantly reshaping its own environment.

Why This Matters

Agent systems fail silently, confidently, and often nondeterministically. Without semantic observability - traces, DAGs, provenance, influence maps - you cannot debug or govern them.

These primitives give operators visibility into intent, not just infrastructure. They expose decision paths, not just metrics. They reveal semantic drift, not just error codes.

And most importantly, they form the actionable foundation for Part 16: Human-in-the-Loop Governance, where human judgment, approvals, overrides, and investigations are layered on top of the semantic telemetry created by Agent-Native Observability.

Practical Next Steps

- Capture structured semantic traces for every agent run

- Instrument tool calls as graph edges

- Build provenance logs with cryptographic links

- Record memory influence slices

- Generate workflow lineage DAGs

- Run divergence tests during deployment

- Define a Unified Agent Observability Format

- Integrate observability with orchestrator routing and replay

Observability is the nervous system that lets us understand, trust, and control intelligent systems.